In my previous post I mentioned that I had set up Microsoft’s SAPI to voice-control my lights. For the most part it works well, thanks to the tweaks I made to the grammar file, except that the recognizer has to be trained on each person’s voice. For what I want to accomplish, that is a big problem: I want anyone to be able to walk into my house and use the system. It should be hands-free and shouldn’t make turning on the lights more complicated by requiring something like a phone (though that can be an option too). I have been toying with the idea of using RFID/NFC, but that would still mean always having your phone around, and not everyone has an NFC-enabled phone. So hopefully speech recognition is the answer. I searched for a microphone suited to large rooms, such as an array microphone, but most were out of my budget, and I’d still have the training problem. Then I had a thought: I already have an array microphone in the living room. The Kinect!

Now, with Kinect SDK 1.5 installed and the Kinect connected, it’s time to get down and dirty with the code. This is my first time using the Kinect SDK; before this I had only tried some demos with the OpenNI drivers. If you have a Kinect and want to try one, this is the one I tried: http://reconstructme.net/. Pretty cool stuff. I found a few VB.NET examples for Visual Studio 2010 that use the speech engine and helped a lot. You can find them, along with the downloads I used, here:

VB Demo:

http://blogs.msdn.com/b/vbteam/archive/2011/06/16/kinnect-sdk-for-pc-vb-samples-available.aspx

Kinect Downloads

http://www.microsoft.com/en-us/kinectforwindows/develop/developer-downloads.aspx

Speech SDK v11

http://go.microsoft.com/fwlink/?LinkID=240081

What I found is that most Kinect development is done in Windows Presentation Foundation (WPF) rather than WinForms; from what I’ve read, WPF is better suited to graphics-intensive applications. The Developer Toolkit you can download with the SDK is entirely WPF, with examples in multiple languages.

The first example listed above is a console application that listens for a few spoken colors and reports whether each one was accepted. The problem is that it was written for the Beta SDK, and version 1.0 changed the naming conventions. Originally, you imported like this:

```vbnet
Imports Microsoft.Research.Kinect
```

to this:

```vbnet
Imports Microsoft.Kinect
```

That was only one of several renames. I found a guide, Migration Steps, that includes a migration library to help convert Beta code. After a few hours I finally got the demo running with SDK 1.5.
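If you’re porting Beta code too, here is a minimal sketch of how SDK 1.x code typically finds and starts a sensor. It assumes a single Kinect and a reference to Microsoft.Kinect.dll from SDK 1.5; the module and function names are my own hypothetical ones, not part of the SDK or the demo.

```vbnet
Imports System.Linq
Imports Microsoft.Kinect   ' SDK 1.0+ namespace (the Beta used Microsoft.Research.Kinect)

' Hypothetical helper module, not part of the SDK.
Module KinectStartup
    ' Returns the first sensor reporting itself as connected, already started,
    ' or Nothing if no Kinect is plugged in.
    Public Function StartFirstKinect() As KinectSensor
        Dim sensor As KinectSensor =
            KinectSensor.KinectSensors.FirstOrDefault(Function(k) k.Status = KinectStatus.Connected)
        If sensor IsNot Nothing Then
            sensor.Start()
        End If
        Return sensor
    End Function
End Module
```

Checking the result for Nothing at startup gives a clean "no Kinect found" path instead of an exception later on.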

I started from the converted demo but ended up replacing most of it with code from the Developer Toolkit examples. With minimal effort I got that running in WinForms. I like the toolkit example much better: it loads the grammar from an XML file and splits initialization into separate functions, which makes it easy to follow how everything fits together.
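As a rough sketch of that structure (my reconstruction, not the toolkit’s exact code), initialization splits into two small functions: one finds the en-US recognizer that the Kinect language pack marks as its own, and one builds an engine and loads the grammar from an XML file. The grammar in the comments, the file name LightsGrammar.xml, and the LIGHTS_ON/LIGHTS_OFF tags are hypothetical examples; the lookup assumes the Microsoft Speech Platform runtime and the Kinect language pack are installed.

```vbnet
Imports System
Imports Microsoft.Speech.Recognition

' Hypothetical grammar file LightsGrammar.xml (SRGS with literal semantic tags):
'   <grammar version="1.0" xml:lang="en-US" root="lights"
'            tag-format="semantics/1.0-literals" xmlns="http://www.w3.org/2001/06/grammar">
'     <rule id="lights" scope="public">
'       <one-of>
'         <item><tag>LIGHTS_ON</tag><one-of><item>lights on</item><item>turn on the lights</item></one-of></item>
'         <item><tag>LIGHTS_OFF</tag><one-of><item>lights off</item><item>turn off the lights</item></one-of></item>
'       </one-of>
'     </rule>
'   </grammar>

' Hypothetical helper module, not part of the SDK.
Module SpeechSetup
    ' Returns the en-US recognizer whose AdditionalInfo("Kinect") is "True",
    ' or Nothing if the Kinect language pack is not installed.
    Public Function GetKinectRecognizer() As RecognizerInfo
        For Each recognizer As RecognizerInfo In SpeechRecognitionEngine.InstalledRecognizers()
            Dim value As String = Nothing
            recognizer.AdditionalInfo.TryGetValue("Kinect", value)
            If "True".Equals(value, StringComparison.OrdinalIgnoreCase) AndAlso
               "en-US".Equals(recognizer.Culture.Name, StringComparison.OrdinalIgnoreCase) Then
                Return recognizer
            End If
        Next
        Return Nothing
    End Function

    ' Creates an engine for the given recognizer and loads the grammar from an XML file on disk.
    Public Function CreateEngine(recognizer As RecognizerInfo, grammarPath As String) As SpeechRecognitionEngine
        Dim engine As New SpeechRecognitionEngine(recognizer.Id)
        engine.LoadGrammar(New Grammar(grammarPath))
        Return engine
    End Function
End Module
```

Keeping the phrases in the XML file means new commands don’t require a recompile, and the literal tags give the event handler one stable value to act on no matter which phrasing was spoken.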

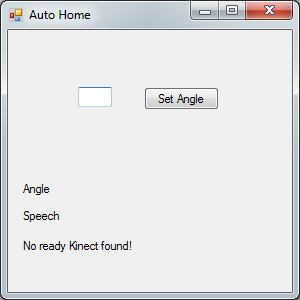

Here is what I came up with to test it in WinForms with the words I would be using:

[Embedded code: VB.Net Kinect Speech Application]
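This is not my application’s code, just a minimal WinForms sketch of the same flow: stream audio from the Kinect into the engine, then report each phrase as accepted or rejected. The class name SpeechForm and the 0.3 confidence cut-off are assumptions to tune for your own room, and the form expects an already started sensor and an engine with its grammar loaded.

```vbnet
Imports System
Imports System.Windows.Forms
Imports Microsoft.Kinect
Imports Microsoft.Speech.AudioFormat
Imports Microsoft.Speech.Recognition

' Hypothetical form: shows whether each spoken command was accepted.
Public Class SpeechForm
    Inherits Form

    ' Assumed cut-off; matches below it are reported as rejected.
    Private Const ConfidenceThreshold As Double = 0.3

    Private ReadOnly _status As New Label With {.Dock = DockStyle.Fill}
    Private ReadOnly _sensor As KinectSensor
    Private ReadOnly _engine As SpeechRecognitionEngine

    Public Sub New(sensor As KinectSensor, engine As SpeechRecognitionEngine)
        _sensor = sensor
        _engine = engine
        Controls.Add(_status)
        AddHandler _engine.SpeechRecognized, AddressOf OnSpeechRecognized
        AddHandler _engine.SpeechRecognitionRejected, AddressOf OnSpeechRejected
    End Sub

    Protected Overrides Sub OnLoad(e As EventArgs)
        MyBase.OnLoad(e)
        ' The Kinect microphone array delivers 16 kHz, 16-bit, mono PCM.
        Dim audioFormat As New SpeechAudioFormatInfo(EncodingFormat.Pcm, 16000, 16, 1, 32000, 2, Nothing)
        _engine.SetInputToAudioStream(_sensor.AudioSource.Start(), audioFormat)
        _engine.RecognizeAsync(RecognizeMode.Multiple)
    End Sub

    Private Sub OnSpeechRecognized(sender As Object, e As SpeechRecognizedEventArgs)
        ' Prefer the grammar's semantic tag; fall back to the raw text if there is none.
        Dim command As Object = If(e.Result.Semantics.Value, e.Result.Text)
        If e.Result.Confidence >= ConfidenceThreshold Then
            ShowStatus("Accepted: " & command.ToString())
        Else
            ShowStatus("Rejected (low confidence): " & e.Result.Text)
        End If
    End Sub

    Private Sub OnSpeechRejected(sender As Object, e As SpeechRecognitionRejectedEventArgs)
        ShowStatus("Rejected")
    End Sub

    ' Recognition events may arrive on a worker thread, so marshal to the UI thread first.
    Private Sub ShowStatus(message As String)
        If _status.InvokeRequired Then
            _status.BeginInvoke(New Action(Of String)(AddressOf ShowStatus), message)
        Else
            _status.Text = message
        End If
    End Sub

    Protected Overrides Sub OnFormClosing(e As FormClosingEventArgs)
        _engine.RecognizeAsyncStop()
        _sensor.AudioSource.Stop()
        _sensor.Stop()
        MyBase.OnFormClosing(e)
    End Sub
End Class
```

Starting recognition in OnLoad rather than the constructor ensures the form’s handle exists before any recognition event tries to update the label.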

I also added control of the Kinect’s tilt motor and a readout of the angle where it thinks you are in the room!
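For the motor and the speaker angle, SDK 1.x exposes the sensor’s ElevationAngle (bounded by MinElevationAngle and MaxElevationAngle) and the audio source’s SoundSourceAngleChanged event. Here is a minimal sketch with hypothetical module and method names. The angle events only arrive while the audio source is started, and the motor is meant to be moved sparingly, not continuously.

```vbnet
Imports System
Imports Microsoft.Kinect

' Hypothetical helper module, not part of the SDK.
Module MotorAndAngle
    ' Keeps a requested tilt inside the range the sensor reports it can reach.
    Public Function ClampTilt(requested As Integer, minAngle As Integer, maxAngle As Integer) As Integer
        Return Math.Max(minAngle, Math.Min(maxAngle, requested))
    End Function

    ' Setting ElevationAngle physically moves the motor.
    Public Sub TiltTo(sensor As KinectSensor, degrees As Integer)
        sensor.ElevationAngle = ClampTilt(degrees, sensor.MinElevationAngle, sensor.MaxElevationAngle)
    End Sub

    ' Prints where the microphone array estimates the sound is coming from.
    Public Sub WatchSoundSource(sensor As KinectSensor)
        AddHandler sensor.AudioSource.SoundSourceAngleChanged,
            Sub(s, e) Console.WriteLine("Sound source at {0:F1} degrees (confidence {1:F2})", e.Angle, e.ConfidenceLevel)
    End Sub
End Module
```

Clamping before assigning means a slider or voice command asking for too much tilt just stops at the limit.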

Overall it wasn’t too hard to learn, and I’m really happy with the results. The speech recognition works really well, and I haven’t even touched what the Kinect’s other sensors can do. Meanwhile, I’ve been trying to find a cheap Kinect on Craigslist so I don’t have to keep borrowing the one from the living room, but no one ever emailed me back. Thankfully, my mom emailed me this morning about a Best Buy deal of the day: a Kinect for $99. What timing!

Edit:

While finishing this post and gathering the links, I realized something: I can use the Microsoft Speech Platform SDK 11 without a Kinect, in place of the SAPI 5.x engine my first application uses. I’ll have to test how well it works without the Kinect’s microphone array; that would be cheaper, but probably not as good as I’d like.
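A minimal sketch of what that could look like: the same Microsoft.Speech API, but with the default Windows recording device as input instead of the Kinect audio stream. It assumes the Speech Platform runtime and an en-US language pack are installed; the module and function names are hypothetical, and whether an ordinary microphone is accurate enough across a room is still the open question.

```vbnet
Imports System
Imports System.Linq
Imports Microsoft.Speech.Recognition

' Hypothetical helper module, not part of the SDK.
Module DesktopMicSpeech
    ' Returns the first installed Speech Platform recognizer for the culture, or Nothing.
    Public Function FindRecognizer(cultureName As String) As RecognizerInfo
        Return SpeechRecognitionEngine.InstalledRecognizers().FirstOrDefault(
            Function(r) r.Culture.Name.Equals(cultureName, StringComparison.OrdinalIgnoreCase))
    End Function

    ' Same XML grammar approach, but listening on the default recording device.
    Public Function CreateDefaultMicEngine(info As RecognizerInfo, grammarPath As String) As SpeechRecognitionEngine
        Dim engine As New SpeechRecognitionEngine(info.Id)
        engine.LoadGrammar(New Grammar(grammarPath))
        engine.SetInputToDefaultAudioDevice()
        Return engine
    End Function
End Module
```

Because only the input source changes, the same grammar file and recognition handlers could be reused to compare the two setups side by side.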